Sometimes I run into a description of intentionality that illustrates the topic far better than I ever could. This happened recently when …

Tag: consciousness

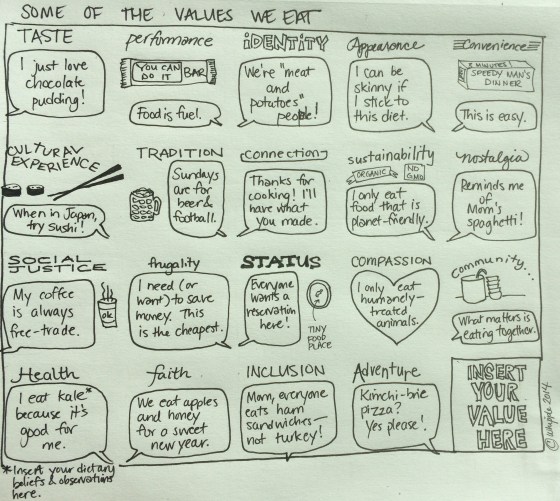

The Values You Eat

Between the Wedding Diet and my more recent approach to counting calories, I’ve obviously been thinking a lot about food. …

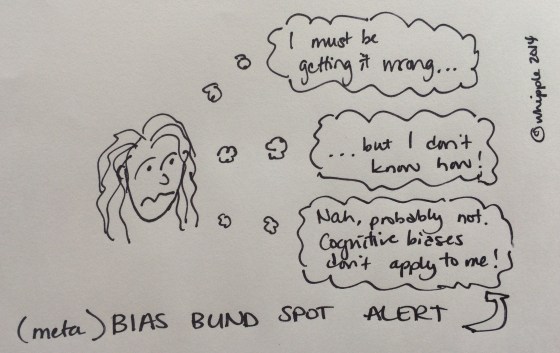

These Are a Few of My Favorite Biases

I often think that if we all become more aware of how we’re acting and choiceful about how we want …

Are You Reacting To Life or Creating Your Life?

This week someone posed the question “Are you reacting to life or creating your life?” I liked the formulation and decided …

Intentionality

Outside of Arenberg, Germany

Why The Intentional? I’ll admit that at the beginning, my ambitions were far grander than a …